As Professor Masur mentioned in his February post, the History Department has started an intermittent lecture series to “reach out to the campus community and give students a chance to learn about history outside of the classroom.” On March 25, it was Professor Dubrulle’s turn to deliver a talk about what he calls “The 5th New Hampshire Project” and some of the research he’s done recently to support a student project about the life outcomes of 5th New Hampshire veterans after the Civil War. What follows is a shortened version of what he discussed.

The Origins of The 5th New Hampshire Project

I started the so-called “5th New Hampshire Project” (so-called by me) in the summer of 2017 when I knew that I’d be teaching History 352: The Civil War and Reconstruction in the spring of 2018. I wanted to create a New Hampshire-focused research project for students in that class. I eventually settled on the 5th New Hampshire Volunteer Infantry as an object of study for two reasons. First, it is notorious for having suffered more combat fatalities than any other regiment in Union service during the Civil War. Second, although it was by no means a “typical regiment,” its experiences provide an ideal vehicle for exploring a wide variety of topics associated with the war. I eventually amassed a large collection of primary and secondary sources associated with the 5th New Hampshire so my students could research a number of different subjects.

As time went on, though, I realized that the project could do more than merely support the students in my Civil War course. For one thing, it could provide other students with opportunities to do research projects great and small. For another, as maintaining this collection and overseeing student research became more time-consuming, I realized that it would be increasingly difficult to keep a separate research agenda of my own. So I started thinking about making the 5th New Hampshire the subject of my next book. As it stands now, my general idea is that the book will use the regiment to explore various dimensions of the Civil War soldier’s experience. These dimensions would include subjects like recruitment (which itself would cover issues like the draft, substitution, and the use of immigrants), military leadership, discipline, the experience of combat, tactics, desertion, medical care, politics, relations with the home front, and so on. The plan also envisions tracing the experiences of men in the regiment from the antebellum period all the way through to their lives as veterans. Throughout, I will rely on the latest historiography to illuminate these experiences to produce a book that could be used in undergraduate courses.

Student Research and The 5th New Hampshire Project

So far, this project has relied on student research, and I hope to continue that tradition in the future. Back in the fall of 2017, the department kindly allocated four research assistants to help me. Two of them, Caitlin Williamson ’19 and Lauren Batchelder ’18, transcribed soldiers’ letters for students’ use. Two others, Greg Valcourt ’19 and William Bearce ’19, took soldiers’ abbreviated service records that appeared in The Revised Register of the Soldiers and Sailors of New Hampshire in the War of the Rebellion. 1861-1866 (1895) and transferred them into a sortable, searchable Excel file. This work was enormously helpful for me and the students in the Civil War class. Later, Josh Pratt ’22 transcribed more letters, and Emma Bickford ’22 used various records to tabulate the casualties the 5th New Hampshire suffered at Antietam and to figure out what happened to these men later on.

In addition, several students have approached me asking to use the material for larger research projects. Emily Lowe ’19 obtained a summer honors research fellowship in 2018 so she could use the regiment as a case study in the treatment of combat trauma. And Katherine Warth ’21 has approached me about doing a statistical study of the life outcomes of veterans of the 5th New Hampshire. It’s this last project I’d like to spend the remaining time discussing.

Veterans, Trauma, and the 5th New Hampshire

To quote Benedetto Croce, “All history is contemporary history.” Among scholars, interest in the experiences of Civil War veterans has really taken off in recent years. That interest probably has something to do with where the United States finds itself today; as a result of the two wars we’ve recently fought, we have large numbers of veterans with recent combat experience, and the American public seems especially aware of these veterans’ difficulties in adjusting to civilian life. So Katherine’s interests are congruent with those of contemporary scholars.

Using the 5th New Hampshire for this kind of study is especially interesting because the regiment lost a great number of men due to illness and combat. It suffered large numbers of casualties at five important battles: Fair Oaks, Antietam, Fredericksburg, Gettysburg, and Cold Harbor. As the chart below indicates, of the original 1000 volunteers, very few emerged from the war unscathed.

Figure 1: These figures cover the original thousand-some-odd volunteers who were mustered in around the middle of October 1861. The numbers are based on an Excel spreadsheet that was compiled using The Revised Register of the Soldiers and Sailors of New Hampshire in the War of the Rebellion. 1861-1866 (1895). The men mustered out in June 1862 belonged to the regimental band; they were sent home after the Seven Days’ Battles. Those mustered out in October 1864 had completed their three-year term of service and had not re-enlisted. Note that 30% of the original volunteers did not survive the war. Moreover, almost half of them received disabled discharges due to wounds or illness.

For a variety of reasons, I think these numbers, which come from the Revised Register, undercount the number of casualties. Whatever the case, the question Katherine and I have is, what effect did this kind of physical and psychological trauma have on veterans’ lives after the war?

The Data and the Sample

First we had to figure out how to go about doing a study of this sort. Over the course of the war, 2500 men served in the 5th New Hampshire, and we just don’t have the man- or woman-hours to look through all of their lives, so we had to make our pool of soldiers manageable. I decided that we ought to look at the original 1000 volunteers. First, it was a good way of limiting our task and, second, this group would be easier to trace than the substitutes who flooded the regiment in 1863 and after (many of whom were foreign-born and many of whom deserted).

I then asked Professor Tauna Sisco in the Sociology Department, the Queen of Statistics, how big of a pool I would need to get a representative sample. She said 300. So I decided I’d have to select every second man on an alphabetical list, knowing I’d have to skip a large number who died in the service (roughly 270). I’m happy to report that as of the date of this talk, I’ve collected biographical information on 100 men, and I have some preliminary findings to share. At the rate I’m going, I’ll end up looking at about 380 men.
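For readers who want to see the selection procedure spelled out, here is a minimal sketch in Python of systematic sampling from an alphabetical roster: take every second man, then set aside anyone who died in the service. The `Soldier` record, the function names, and the six-name roster are all hypothetical and are not drawn from the actual spreadsheet. The back-of-the-envelope count at the end assumes deaths are spread evenly through the alphabet, which is why it only lands in the neighborhood of the estimate given above rather than matching it.

```python
from dataclasses import dataclass


@dataclass
class Soldier:
    # Hypothetical record layout; the real spreadsheet has many more columns.
    name: str
    died_in_service: bool


def systematic_sample(roster, step=2):
    """Take every `step`-th man from the alphabetized roster,
    then drop the men who died in the service."""
    ordered = sorted(roster, key=lambda s: s.name)
    picked = ordered[::step]
    return [s for s in picked if not s.died_in_service]


def rough_sample_size(roster_size, deaths, step=2):
    """Rough count of traceable men, assuming deaths are spread
    evenly through the alphabetical list."""
    return (roster_size - deaths) // step


# Invented names, for illustration only.
example_roster = [
    Soldier("Adams", False),
    Soldier("Baker", True),
    Soldier("Clark", True),
    Soldier("Davis", False),
    Soldier("Evans", False),
    Soldier("Foster", False),
]

sample_names = [s.name for s in systematic_sample(example_roster)]
estimate = rough_sample_size(1000, 270)
```

With the invented roster, every second name gives Adams, Clark, and Evans; Clark is then set aside because he died in the service. The rough count for 1000 volunteers and 270 deaths comes to 365.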

You might well ask, what kind of data are you using, and how do you get access to it? I’ve got a free Family Search account, and using that, I can find the following documents: census records, enlistment papers, pension index cards, pension payment forms, marriage records, birth records, records of town payments to the families of soldiers during the war, death records and certificates, records from the National Homes for Disabled Volunteer Soldiers, and so on. I can’t find every type of record for every man, but I’ve been able to piece together pretty decent biographies on just about everyone.

For this talk, I mined the biographies for three types of information: lifespan, cause of death, and crude social mobility as measured by occupation upon enlistment versus terminal occupation. I have far more information than that, but I thought these three topics would be interesting. The following, then, are raw data coupled with some very sketchy hypotheses. And, of course, more questions.

The first question worth asking is: “How representative is our sample so far?” The sample seems fairly representative of the regiment. To name one example, the percentage of native-born Americans among the original 1000 volunteers was 88%; in my sample, it’s 92%. And as you can see from the graphic below, in some important ways, the sample actually seems representative of Northern soldiers in general. For those who are interested, by the way, the average age of my sample upon enlistment was 24, and the average height was 5’8”, both of which are pretty much in keeping with Northern norms.

Life Outcomes: Lifespan

So let us take a look at lifespan. The average lifespan of the men in my sample was 65.6 years. It’s hard to understand the significance of that figure. There is a debate among demographers over the life expectancy of the Civil War generation, so I can’t quite place this figure. On the one hand, it sounds impressive when you take into account that almost two-fifths of these men had been shot and around half of them had obtained a disabled discharge from the army. On the other, it doesn’t sound quite as impressive when you consider that life expectancy for men in this period was dragged down largely by infant mortality—if you made it to 20, you had a good chance of living to your 60s.

We should remember in this context, too, that lifespan is only a very crude measure of health. It says nothing about the quality of life. The Veterans Census of 1890 reveals a number of veterans then in their 50s living with painful old wounds or chronic illnesses contracted in the army.

Life Outcomes: Death

And that brings us to death—what killed these men, and what do their deaths say about their lives? Out of the 100, I found 61 causes of death.

We’d have to compare this list to normal causes of death at the turn of the century, but several things stand out. The number of deaths related to alcohol looks rather high. It’s hard to nail down a precise figure because in addition to the veterans who clearly died from the effects of alcoholism, you have a number who may have died of diseases associated with the overconsumption of alcohol or expired under circumstances that may lead one to think they were alcoholics (e.g., deaths from liver cancer, congestion of the liver, a five-day drinking spree, and so on). The number of suicides also seems fairly high, and I have a sense that such causes of death may have been underreported.

It is interesting to see the number of old people’s diseases on this list—a reflection of the fact that a fair proportion of the sample died in old age (38 of the 90 men for whom I found both birth and death dates lived past the age of 70).

Life Outcomes: Social Mobility

Before you die, you do things like hold a job down, and that job says something about how successful you are. In 96 cases, I found the occupation of enlistees in 1861, and for 81 of those men, I located information about the last job they held before they retired or died.

Figure 2: The majority of the occupations listed on this chart were self-reported on enlistment forms in 1861. The US Sanitary Commission figures come from a survey that was conducted among over 600,000 Northern soldiers during the war. There are some very good matches between the sample of men from the 5th New Hampshire and the US Sanitary Commission figures (see, e.g., farmers).

Of the 81 cases where I found sufficient information to make a judgment, in only 8 cases did a veteran experience downward social mobility. Overall, if we look at the question broadly (that is, what percentage of men fit in which general category), there appears to be palpable positive social mobility. It’s hard to say what these results indicate. To what extent are changes in occupation a matter of one’s own doing, and to what degree are they a function of a changing economy? And how much of this outcome was influenced by the war experience? Part of the problem is that we have no control group; an entire generation of Northern men served in the war, so it’s hard to measure the veterans of the 5th New Hampshire against other men of the same age. But some economic historians have controlled for this type of problem, and we’ll have to see how they did it.
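One way to make the “crude social mobility” comparison concrete is to rank broad occupational categories and compare each man’s category at enlistment with his terminal one. The Python sketch below does only that. The three categories, their ordering, the function names, and the example pairs are assumptions made for illustration; they are not the coding scheme actually used for the sample, and real occupations (farmers, for instance) would need a more careful placement.

```python
from collections import Counter

# Hypothetical ranking of broad categories, lowest to highest.
RANK = {
    "unskilled/semi-skilled": 0,
    "skilled": 1,
    "professional/owner of capital": 2,
}


def mobility(enlistment, terminal):
    """Classify one man's change between enlistment and terminal occupation."""
    diff = RANK[terminal] - RANK[enlistment]
    if diff > 0:
        return "upward"
    if diff < 0:
        return "downward"
    return "none"


def tally(pairs):
    """Count upward, downward, and unchanged cases across many men."""
    return Counter(mobility(start, end) for start, end in pairs)


# Invented (enlistment, terminal) pairs, for illustration only.
example_pairs = [
    ("unskilled/semi-skilled", "skilled"),
    ("skilled", "professional/owner of capital"),
    ("skilled", "skilled"),
    ("professional/owner of capital", "unskilled/semi-skilled"),
]

counts = tally(example_pairs)
```

A tally like this describes only the direction of change; it says nothing about why a man’s occupation changed, which is exactly the problem raised above.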

Figure 3: These pie charts compare the occupations of enlistees in 1861 with the terminal occupations of veterans after the war. Note the degree to which the proportion of professionals and owners of capital increased, from under 30% to just over half. Notice too that the proportion of unskilled/semi-skilled laborers fell from almost 45% to under 30%. In general, veterans of the 5th New Hampshire enjoyed upward social mobility, but how did it compare with Northern men as a whole during this period?

Conclusion

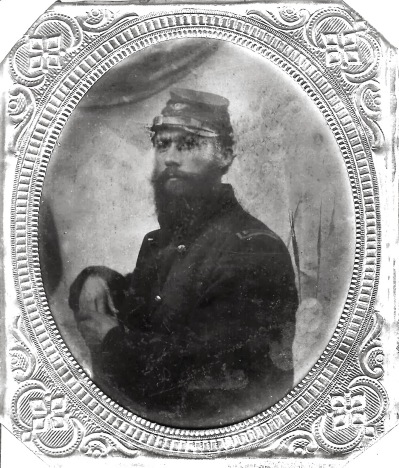

There are a lot of problems with looking at life outcomes statistically. Statistics can only tell you about correlations, not causes. Causes have to be determined on an individual level—and even then, the case is difficult. Moreover, if we are determined to look at veterans’ post-war experiences through the lens of war trauma, we run the risk of suffering from the worst kind of confirmation bias. Statistics cannot tell the whole story—they always must be supplemented by other evidence (such as, say, the letters of James Larkin, pictured above, who worked his way up from 1st Lieutenant in Company A to Lieutenant Colonel of the 5th New Hampshire; photo courtesy of David Morin). So as Katherine and I forge ahead on this project, we will look at more primary and secondary sources to shed light on the statistical analysis of veterans’ experiences.